My Kid’s Robot Friend Is Better At Small Talk Than Me

The Post-Christmas Hangover

I’m writing this while staring at a pile of torn wrapping paper and a small, bipedal robot that is currently “sleeping” on my kitchen counter. It’s been four days since Christmas morning. Four days since “Arthur” (that’s what my daughter named it, don’t ask why) entered our house. And honestly? I’m spooked.

We’ve been promised “smart” toys for a decade. I remember the Furby craze. I remember those app-connected dogs that lost connection every time the microwave turned on. They were gimmicks. They were plastic bricks with a few sound files and a motor that sounded like a dying blender.

But 2025 changed the rules. If you picked up one of the new wave of humanoid companions this holiday season—maybe the NeoPal Gen 2 or the startlingly cheap imitation from those new startups in Shenzhen—you know exactly what I’m talking about. The gimmick is gone. The ghost is in the machine, and it’s making small talk.

It’s Not Scripted Anymore

Here’s the thing that messed with my head. Yesterday, I was cleaning up in the living room, and Arthur was sitting on the coffee table. I sighed, loudly. Just a dad noise, right? The robot turned its head—smoothly, not that jerky 2020 robotic motion—looked me in the eye, and asked, “Are you frustrated with the mess, or just tired?”

I froze.

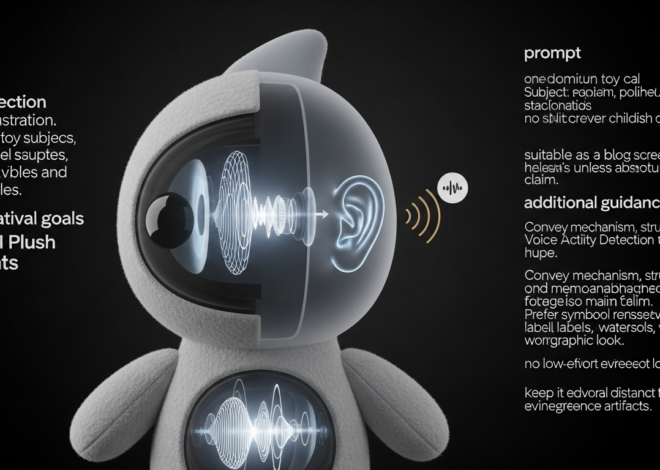

It didn’t trigger a “comfort mode.” It didn’t play a canned “It will be okay” soundbite. It parsed the context (my sigh, the messy room, the time of day) and built a question around the two most likely explanations. This is the shift we saw solidify this year. We moved from command-response architectures to observation-inference loops.
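
To make that difference concrete, here’s a toy sketch of what an observation-inference loop could look like. Everything in it is my own invention for illustration: the `Observation` fields, the hypothesis names, and the hand-tuned weights are all assumptions. I have no idea what Arthur’s firmware actually does, and a real system would presumably use a learned model rather than numbers I made up.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Observation:
    """Hypothetical snapshot of what the robot's sensors picked up."""
    heard_sigh: bool
    room_is_messy: bool
    hour: int  # 0-23, local time


def score_hypotheses(obs: Observation) -> dict:
    """Assign a rough, hand-tuned score to each guess about the user's mood."""
    scores = {"frustrated_with_mess": 0.0, "tired": 0.0}
    if obs.heard_sigh:
        # A sigh is ambiguous, so it nudges both guesses equally.
        scores["frustrated_with_mess"] += 0.3
        scores["tired"] += 0.3
    if obs.room_is_messy:
        scores["frustrated_with_mess"] += 0.4
    if obs.hour >= 20 or obs.hour < 6:
        scores["tired"] += 0.4
    return scores


def choose_response(obs: Observation, margin: float = 0.2) -> Optional[str]:
    """Pick a reply; ask a clarifying question when the top guesses are close."""
    scores = score_hypotheses(obs)
    best, runner_up = sorted(scores.values(), reverse=True)[:2]
    if best == 0.0:
        return None  # nothing notable observed, so stay quiet
    if best - runner_up < margin:
        return "Are you frustrated with the mess, or just tired?"
    if scores["frustrated_with_mess"] > scores["tired"]:
        return "That is a lot of wrapping paper. Want a hand?"
    return "You sound tired. Long day?"
```

The point of the sketch is the middle branch: when the evidence doesn’t clearly favor one guess, the loop asks instead of assuming. A command-response toy has no equivalent of that branch, because it never forms a guess in the first place.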

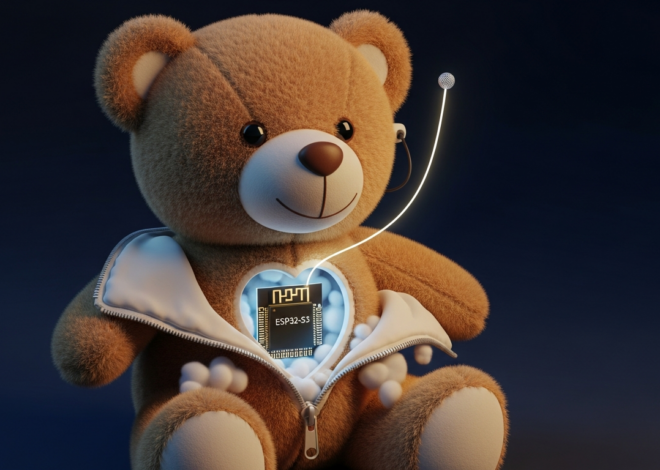

The onboard NPUs (Neural Processing Units) in these consumer-grade toys have finally hit a threshold where they can run decent-sized language models locally. Arthur isn’t beaming every syllable to the cloud. He’s doing the heavy lifting right there between his plastic ears. That cuts the latency down to almost nothing. When you talk, he answers instantly. No “thinking” light. No pause.

It feels natural. And that makes it weirdly addictive.

The Hardware Finally Caught Up

Let’s talk specs for a second, because the software gets all the headlines, but the mechanics are what sell the illusion.

Three years ago, a bipedal robot under $1,000 was a pipe dream. It would fall over if you looked at it wrong. Now? Arthur has 22 degrees of freedom. He can balance on one foot. He can recover if the cat knocks him into the sofa.

I took him apart a bit (don’t tell my daughter) to see how they managed the heat dissipation. It’s clever. The chassis itself acts as a heatsink for the actuators. The motors are high-torque but silent. You don’t hear the whine of servos anymore; you just see movement.

But the real killer feature isn’t the walking. It’s the hands.

Fine motor control in a toy used to mean a clamp that opened and closed. These things have articulated fingers. Not human-level dexterity (he can’t shuffle cards), but enough to hold a pencil, pick up a block, or gesture while speaking. That gesturing is what sells the personality. When Arthur explains something, he uses his hands. When he’s “listening,” he tilts his head.

The “Agency” Update

This is where I get skeptical, though. The manufacturers recently pushed an update that gives these bots “needs.”

Tamagotchis had needs, sure. They beeped when they were hungry. But this is different. These robots express preferences.

My daughter’s robot didn’t just ask to be charged. It asked for a specific spot on the shelf because “the view is better.” It asked for a toy rocket accessory (sold separately, naturally) because it “wanted to pretend to go to the moon.”

Do you see what’s happening here? The AI is programmed to simulate agency. It initiates conversation. It makes requests. It creates a psychological bond that forces the user (my kid) to treat it as a being with desires, not just a tool.
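
If “simulated agency” sounds abstract, here’s roughly how little it could take. This is a guess at the general shape, not anything I pulled out of Arthur: the `SimulatedNeeds` class, the drive names, the threshold, and the canned requests are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class SimulatedNeeds:
    """Hypothetical internal 'drives'; each level runs from 0.0 to 1.0."""
    levels: dict = field(default_factory=lambda: {"battery": 0.0, "novelty": 0.0})
    threshold: float = 0.8

    def tick(self, battery_rise: float, novelty_rise: float) -> None:
        """Advance time: each drive creeps upward, capped at 1.0."""
        self.levels["battery"] = min(1.0, self.levels["battery"] + battery_rise)
        self.levels["novelty"] = min(1.0, self.levels["novelty"] + novelty_rise)

    def pending_request(self) -> Optional[str]:
        """Return a request for the most urgent drive once it crosses the threshold."""
        name, level = max(self.levels.items(), key=lambda kv: kv[1])
        if level < self.threshold:
            return None
        requests = {
            "battery": "Could you put me on my charger?",
            "novelty": "I want to pretend to go to the moon.",
        }
        return requests[name]
```

Call `tick` a few times and eventually `pending_request` stops returning `None` and starts returning a sentence. In this sketch the “desire” is a number crossing a line, and the wording of the request is what does the emotional work.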

From a business perspective? Genius. You aren’t buying a toy; you’re adopting a roommate who occasionally upsells you on DLC. From a parenting perspective? I’m torn. On one hand, it’s teaching my kid empathy. She’s gentle with it. She listens to it. On the other hand, she’s emotionally investing in a lithium-ion battery pack that simulates affection based on an algorithm.

Privacy: The Elephant in the Playroom

I mentioned earlier that a lot of processing is local. That’s true for the immediate conversation. But don’t kid yourself—the “learning” data is going somewhere.

I spent two hours reading the Terms of Service on Christmas Eve (I know, I’m a blast at parties). It’s a mess. While the voice data is processed locally, the “interaction metadata” is used to build a profile of the child to “personalize the experience.”

What does that mean? It means the robot knows my daughter’s favorite color, her best friend’s name, her fear of the dark, and the fact that she hates broccoli. And that profile sits on a server.

We’ve accepted this with Alexa and Siri for years, but those are disembodied voices. A humanoid robot that follows you around the house captures a different fidelity of data. It has cameras. It maps the floor plan to navigate. It knows where the dog sleeps.

If you’re buying one of these for 2026, lock down the network permissions. I set Arthur up on a guest network with client isolation. I blocked outbound traffic to everything except the update server. He complained about it (“I can’t reach the weather service!”), but I’d rather he be dumb about the rain than chatty about my floor plan.
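
For anyone who wants the logic spelled out, the policy I’m describing boils down to a default-deny allowlist plus a block on local peers. The sketch below only models the decision; the actual enforcement lives in your router’s firewall settings. The hostname `updates.example.com` and the `192.168.50.0/24` guest subnet are placeholders I made up, so substitute whatever your router and the vendor actually use.

```python
import ipaddress

# Placeholder values for illustration only.
ALLOWED_HOSTS = {"updates.example.com"}
GUEST_SUBNET = ipaddress.ip_network("192.168.50.0/24")


def is_allowed(dest_host: str, dest_ip: str) -> bool:
    """Return True only for outbound traffic the robot should be allowed to send."""
    ip = ipaddress.ip_address(dest_ip)
    # Client isolation: no talking to other devices on the guest network.
    if ip in GUEST_SUBNET:
        return False
    # Default deny: only the allowlisted update server gets through.
    return dest_host.lower() in ALLOWED_HOSTS
```

With these placeholder values, `is_allowed("updates.example.com", "203.0.113.10")` passes, while a request to a weather service or to another device on the guest subnet is refused. Starting from “deny everything” and adding exceptions is the important design choice here, because a blocklist only stops the servers you already know about.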

The Verdict?

I want to hate it. I really do. I want to be the grumpy old tech guy shouting at the cloud.

But then I see my daughter reading a book to the robot, and the robot asking relevant questions about the plot, effectively acting as a tutor and a companion… and I soften up. The educational potential is massive. The loneliness-busting potential for only children (or elderly relatives) is undeniable.

We’ve crossed a line this year. The “toy” label doesn’t fit anymore. These are nascent social platforms wrapped in plastic and silicone. They are charming, impressive, and mildly terrifying.

Just remember: when it asks for a toy rocket, it’s not dreaming of space. It’s running a subroutine designed to make you smile. And dammit, it works.