The Sensory Revolution: How Advanced AI and Sensors are Redefining the Future of Smart Toys

The world of toys is undergoing a transformation as profound as the shift from analog to digital. Gone are the days when a toy’s interactivity was limited to a simple button press or a pull-string. Today, we are witnessing the dawn of a new era, one powered by artificial intelligence and a sophisticated array of sensors that act as the eyes, ears, and even the sense of touch for a new generation of smart toys. These are not just playthings; they are interactive companions, adaptive tutors, and creative partners. The latest AI Toy Sensors News reveals a clear trend: the intelligence of a toy is no longer just in its processor, but in its ability to perceive, understand, and react to the physical world. This article delves into the cutting-edge sensor technologies being integrated into modern toys, explores the AI that brings this sensory data to life, and examines the profound implications for play, education, and child development.

The Sensory Revolution in Smart Toys

The leap from a basic remote-control car to an autonomous, environment-aware vehicle is a testament to the power of modern sensors. This evolution is mirrored across the entire spectrum of the toy industry, from plush companions to complex building kits. The core of this revolution lies in equipping toys with a rich suite of sensors, allowing them to gather diverse data points from their surroundings and interactions. This move from passive objects to perceptive entities is the defining characteristic of today’s most innovative products, frequently highlighted in Smart Toy News and Educational Robot News.

Beyond Basic Buttons: The Modern Sensor Suite

The modern AI toy is a marvel of miniaturized technology, often packing a sensor array that would have been the domain of research labs just a decade ago. Understanding these components is key to appreciating their capabilities.

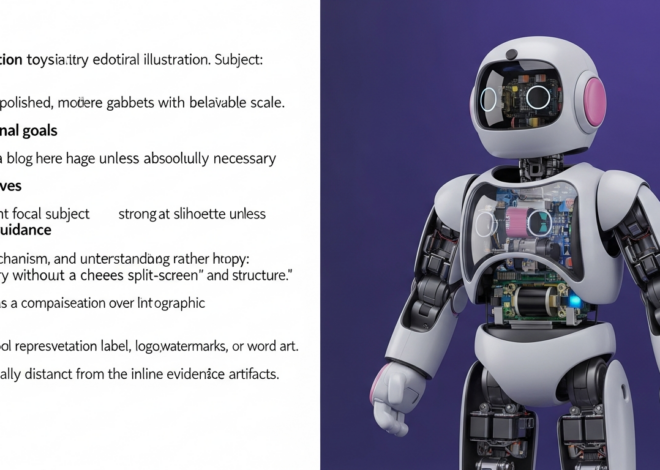

- Vision Sensors (Cameras, LiDAR, ToF): Cameras are increasingly common in smart toys, enabling face recognition, object tracking, and gesture interpretation. More advanced toys, especially those featured in AI Vehicle Toy News and Humanoid Toy News, are incorporating Light Detection and Ranging (LiDAR) or Time-of-Flight (ToF) sensors. These measure the distance to surrounding surfaces, which lets a toy build a depth map of a room and supports navigation and obstacle avoidance far beyond what simple infrared bumpers allow.

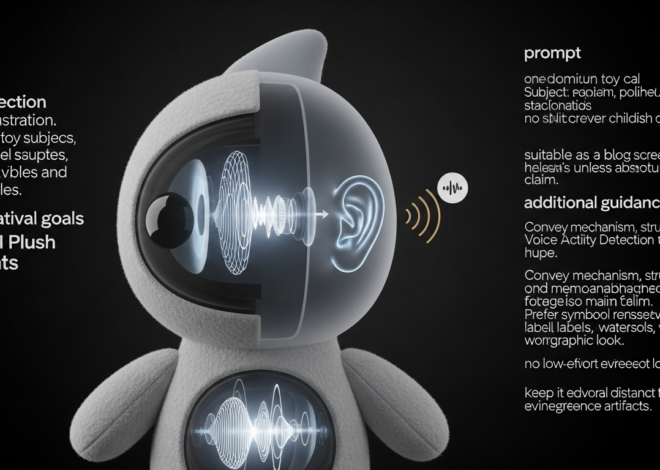

- Auditory Sensors (Microphone Arrays): The latest Voice-Enabled Toy News isn’t just about single-word commands. Advanced microphone arrays can perform sound localization (letting the toy turn towards a speaker), filter out background noise, and, paired with suitable software, analyze vocal tone to estimate a user’s emotional state. This technology is central to the believable storytelling toys and responsive companion toys covered in AI Storytelling Toy News and AI Companion Toy News.

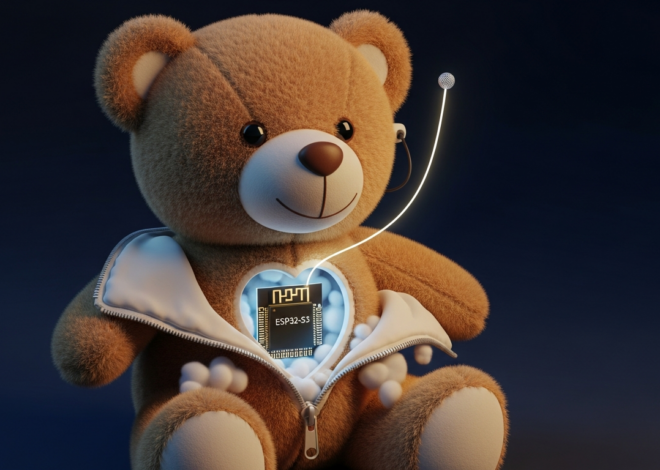

- Tactile and Proximity Sensors: Capacitive touch sensors, pressure pads, and accelerometers give toys a sense of touch. This is crucial for products covered in AI Plush Toy News and Robotic Pet News, where a robotic puppy can differentiate between a gentle pat and a rough push and react accordingly. Proximity sensors (such as infrared and ultrasonic) let a toy detect nearby objects without physical contact, helping it avoid collisions.

- Inertial Measurement Units (IMUs): An IMU, which typically combines an accelerometer and a gyroscope, is the secret behind a toy’s sense of balance and motion. It helps a bipedal robot walk without falling, a drone stabilize itself, and an interactive wand track its own movement in 3D space, a frequent topic in AI Drone Toy News and AI Sports Toy News.
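To make the IMU's role more concrete, here is a minimal sketch of a complementary filter, one common way to blend gyroscope and accelerometer readings into a single tilt estimate. It is an illustration only: the function names, the blend factor `alpha`, and the sample values are assumptions, not taken from any particular toy.

```python
import math

def accel_tilt_deg(ax: float, az: float) -> float:
    """Tilt angle implied by the direction of gravity in the accelerometer reading."""
    return math.degrees(math.atan2(ax, az))

def complementary_filter(prev_angle_deg: float, gyro_rate_dps: float,
                         ax: float, az: float, dt: float,
                         alpha: float = 0.98) -> float:
    """Blend the integrated gyro rate (smooth but drifting) with the
    accelerometer tilt (noisy but drift-free). alpha is an assumed tuning value."""
    gyro_angle = prev_angle_deg + gyro_rate_dps * dt
    return alpha * gyro_angle + (1.0 - alpha) * accel_tilt_deg(ax, az)

# Hypothetical readings: the toy is held still at roughly 30 degrees of tilt.
angle = 0.0
for _ in range(200):
    angle = complementary_filter(angle, gyro_rate_dps=0.0, ax=0.5, az=0.866, dt=0.01)
```

With these made-up readings the estimate starts at zero and drifts toward the accelerometer's answer over a couple of seconds, which is the trade-off `alpha` controls: higher values trust the gyroscope more and react more slowly to the accelerometer.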

The Power of Sensor Fusion

The true magic happens not with a single sensor, but through “sensor fusion.” This is the process where the toy’s AI brain combines data from multiple sensors to build a comprehensive and accurate understanding of its environment and context. For example, a robotic pet of the kind featured in AI Pet Toy News might use its camera to recognize its owner’s face, its microphone array to hear its name being called, and its IMU to detect that it’s being picked up. By fusing this data, it can generate a highly specific and lifelike response, such as wagging its tail and emitting a happy bark, far more convincingly than if it relied on a single input. This complex integration is a major focus of ongoing AI Toy Research News and a hallmark of true AI Toy Innovation News.
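The robotic pet example can be sketched as a simple weighted combination of per-sensor confidences. This is a deliberately simplified illustration: real products may use learned models or probabilistic filters instead, and the class name, weights, and thresholds below are all assumptions chosen for readability.

```python
from dataclasses import dataclass

@dataclass
class Percepts:
    owner_face_conf: float  # 0..1, from a hypothetical face recognizer on the camera feed
    name_heard_conf: float  # 0..1, from a hypothetical keyword spotter on the microphone array
    picked_up: bool         # derived from the IMU, e.g. a sudden upward acceleration

def fuse(p: Percepts) -> float:
    """Combine the three cues into one score. The weights are assumed values."""
    return (0.5 * p.owner_face_conf
            + 0.3 * p.name_heard_conf
            + (0.2 if p.picked_up else 0.0))

def choose_reaction(p: Percepts) -> str:
    """Map the fused score to a behavior; thresholds are illustrative."""
    score = fuse(p)
    if score >= 0.7:
        return "wag_tail_and_bark"
    if score >= 0.4:
        return "look_toward_owner"
    return "idle"

reaction = choose_reaction(Percepts(owner_face_conf=0.9, name_heard_conf=0.8, picked_up=True))
```

The point of the sketch is that no single cue triggers the full greeting: a recognized face alone only earns a glance, while face, voice, and motion together cross the higher threshold.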

From Raw Data to Intelligent Action: The AI-Sensor Pipeline

Having a suite of advanced sensors is only half the battle. The real challenge, and where true innovation lies, is in processing the torrent of data these sensors produce and translating it into intelligent, meaningful action. This complex pipeline is the digital nervous system of every smart toy.

The Data Journey: Sensing to Processing

The journey from a physical stimulus to a toy’s reaction is a high-speed, multi-step process. First, a sensor captures a raw analog signal such as light, sound, or pressure. An analog-to-digital converter turns this signal into digital samples, which are pre-processed to filter out noise. From there, the data is fed into the toy’s AI model. Increasingly, this processing happens directly on the device, a concept known as edge computing. The latest Programmable Toy News often highlights toys with dedicated Neural Processing Units (NPUs) designed specifically to run these AI models efficiently without needing a constant internet connection. This on-device approach offers significant advantages in speed and privacy, a critical concern discussed in AI Toy Safety News. While cloud processing allows for more powerful computations, edge computing avoids network round trips, so the toy responds quickly, and it means sensitive data, like images and sounds from inside a home, doesn’t need to be sent to external servers.
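As a rough illustration of the digitize-then-filter step, the sketch below converts raw converter counts to volts and smooths them with an exponential moving average, a basic low-pass filter. The 10-bit resolution, the 3.3 V reference, and the smoothing factor are assumed values for the example, not specifications of any real toy.

```python
from typing import Iterable, List

def adc_to_volts(raw: int, vref: float = 3.3, bits: int = 10) -> float:
    """Scale a raw converter count to volts (assumed 10-bit converter, 3.3 V reference)."""
    return raw * vref / (2 ** bits - 1)

def low_pass(samples: Iterable[float], alpha: float = 0.2) -> List[float]:
    """Exponential moving average: each output moves a fraction alpha toward the
    newest sample, which damps short spikes. alpha is an assumed tuning value."""
    out: List[float] = []
    y = None
    for x in samples:
        y = x if y is None else alpha * x + (1.0 - alpha) * y
        out.append(y)
    return out

# A single noisy spike in an otherwise quiet signal is strongly attenuated.
smoothed = low_pass([0.0, 0.0, 10.0, 0.0, 0.0])
```

A filter this small is cheap enough to run on a microcontroller before the data ever reaches the AI model, which is one reason pre-processing typically sits at the start of the pipeline.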

Case Study: The Advanced AI Coding Robot

To illustrate this pipeline, consider a hypothetical product of the kind covered in Coding Toy News and STEM Toy News: a modular, wheeled robot designed to teach programming concepts. This robot, which would fit the Robot Kit News category, is equipped with a 360-degree LiDAR sensor, a forward-facing AI camera, an IMU for stability, and a microphone array for voice commands.

After setting up the robot through a simple block-based coding interface on a tablet, a common feature discussed in AI Toy App Integration News, a child gives it a spoken command: “Find my blue teddy bear and bring it here.”

- Sensing: The microphone array captures the command. The LiDAR scanner continuously maps the room’s layout. The camera scans the environment.

- Processing: The onboard AI processes the voice command using natural language processing. The AI model for vision, trained to recognize hundreds of objects, analyzes the camera feed to locate the “teddy bear” and identify its color as “blue.”

- Planning: Using the LiDAR map, the robot’s pathfinding algorithm calculates the most efficient route to the bear, avoiding obstacles like furniture legs and other toys. The IMU provides constant feedback to the motors to ensure smooth, stable movement.

- Action: The robot navigates to the bear, uses its proximity sensors for a final approach, and employs a gripper (the kind of add-on covered in AI Toy Accessories News) to pick it up. It then recalculates a path back to the child and completes the task.

This single command showcases a seamless fusion of auditory, visual, and spatial sensor data, all processed in real time to execute a complex task. This is the level of sophistication that smart toys are moving toward.
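The planning step in the case study can be sketched with a breadth-first search over an occupancy grid, where 0 marks free floor and 1 marks an obstacle. The article does not say which pathfinding algorithm such a robot would use, so breadth-first search is a stand-in chosen for brevity; the grid, function name, and coordinates are hypothetical.

```python
from collections import deque
from typing import Dict, List, Optional, Tuple

Cell = Tuple[int, int]

def shortest_path(grid: List[List[int]], start: Cell, goal: Cell) -> Optional[List[Cell]]:
    """Return a shortest 4-connected path from start to goal, or None if blocked."""
    rows, cols = len(grid), len(grid[0])
    came_from: Dict[Cell, Optional[Cell]] = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            # Walk the parent links back to the start, then reverse.
            path: List[Cell] = []
            cur: Optional[Cell] = cell
            while cur is not None:
                path.append(cur)
                cur = came_from[cur]
            return path[::-1]
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if (0 <= nr < rows and 0 <= nc < cols
                    and grid[nr][nc] == 0 and nxt not in came_from):
                came_from[nxt] = cell
                queue.append(nxt)
    return None

# A tiny made-up room: the middle row is furniture with one gap.
room = [
    [0, 0, 0, 0],
    [1, 1, 0, 1],
    [0, 0, 0, 0],
]
path = shortest_path(room, start=(0, 0), goal=(2, 0))
```

In this toy room the only way through is the gap in the middle row, so the route detours right, passes through it, and doubles back. A real robot would rebuild the grid continuously from its LiDAR scans and likely use a cost-aware planner such as A*.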

The Educational Renaissance: How Smart Sensors are Shaping Learning

The integration of advanced sensors is doing more than just making toys more entertaining; it’s transforming them into powerful educational tools. By perceiving a child’s actions and state of mind, these toys can create personalized learning experiences that were previously unimaginable. This is a recurring theme in AI Learning Toy News and a major driver for the industry.

Personalized Learning and Adaptive Play

Sensors allow a toy to adapt its difficulty and content in real time based on a user’s performance. Imagine a smart puzzle board, the kind of product highlighted in AI Puzzle Robot News, that uses cameras to track where a child is placing pieces. If the child hesitates for too long or repeatedly tries an incorrect piece, the toy can offer a gentle, context-aware hint, such as lighting up the correct area. Similarly, an AI Language Toy News feature might describe a companion that uses advanced speech recognition to analyze a child’s pronunciation. It can then gently correct them and introduce new vocabulary at a pace that matches their learning curve, making it a truly interactive tutor.
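The puzzle-board behavior described above boils down to a small decision rule. The sketch below is one possible way to express it; the state fields, the 20-second hesitation limit, and the three-attempt limit are assumptions made for illustration.

```python
from dataclasses import dataclass

@dataclass
class PuzzleState:
    seconds_since_last_move: float  # how long the child has been idle
    wrong_attempts_same_piece: int  # consecutive misplacements of the current piece

def hint_level(state: PuzzleState,
               hesitation_s: float = 20.0,
               max_wrong: int = 3) -> str:
    """Pick the mildest hint that fits the situation (thresholds are assumed)."""
    if state.wrong_attempts_same_piece >= max_wrong:
        return "light_up_target_area"
    if state.seconds_since_last_move >= hesitation_s:
        return "gentle_prompt"
    return "none"

hint = hint_level(PuzzleState(seconds_since_last_move=5.0, wrong_attempts_same_piece=3))
```

Keeping the thresholds as parameters makes it easy to loosen or tighten the hints for different ages, which is the kind of adaptation the paragraph describes.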

Fostering Empathy and Social-Emotional Skills

The responsiveness enabled by tactile and auditory sensors is a powerful tool for teaching social-emotional skills. The latest AI Plushie Companion News and Interactive Doll News feature toys that react with nuanced “emotions.” A robotic pet that purrs when stroked gently (via touch sensors) but whimpers and retracts if its tail is pulled (via pressure sensors) provides immediate, tangible feedback on the consequences of one’s actions. This helps children learn concepts like empathy, gentle interaction, and cause-and-effect. When combined with an app, this sensory data can be visualized, helping a child understand why their AI companion is “sad” or “happy,” reinforcing these crucial life lessons.
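A minimal sketch of how a toy might separate a gentle stroke from a rough pull is to threshold the peak of a short window of normalized pressure readings. The thresholds, labels, and reaction names here are hypothetical, and a real product would likely combine several sensors and more robust signal processing.

```python
from typing import Dict, Sequence

# Hypothetical mapping from touch type to the toy's response.
REACTIONS: Dict[str, str] = {
    "none": "idle",
    "gentle": "purr",
    "rough": "whimper_and_retract",
}

def classify_touch(pressure_samples: Sequence[float],
                   noise_floor: float = 0.05,
                   rough_min: float = 0.7) -> str:
    """Classify a window of pressure readings (normalized to 0..1) by its peak value."""
    if not pressure_samples:
        return "none"
    peak = max(pressure_samples)
    if peak < noise_floor:
        return "none"
    if peak >= rough_min:
        return "rough"
    return "gentle"

reaction = REACTIONS[classify_touch([0.0, 0.1, 0.2, 0.15])]
```

Because the response follows directly from the measured pressure, the feedback a child receives is immediate and consistent, which is what makes it useful for teaching cause and effect.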

The Path Forward: Opportunities and Ethical Considerations

As sensor technology becomes more powerful and affordable, the possibilities for AI toys are expanding quickly. However, this rapid advancement also brings new responsibilities for developers and important considerations for consumers. Navigating this new landscape requires a balanced perspective.

Key Considerations for Consumers and Developers

For parents and educators, it’s crucial to look beyond the marketing. When considering a new AI toy, investigate the company’s privacy policy—a topic of paramount importance in AI Toy Ethics News. Understand what data the toy’s sensors collect and where it is stored. Reading up-to-date AI Toy Reviews News can provide invaluable insight into a product’s real-world performance and durability. Look for brands that offer consistent software updates, as covered in AI Toy Updates News, which can improve functionality and patch security vulnerabilities.

For developers, the challenge is to use sensors meaningfully. The pitfall of “sensor-stuffing,” that is, adding components for the sake of a longer feature list without integrating them into a cohesive play experience, should be avoided. The focus must be on creating robust, intuitive, and safe interactions. This involves rigorous testing and a commitment to building on a secure and reliable foundation, the kind of toy AI platform covered in Toy AI Platform News.

Future Trends and Innovations

The future, as hinted at in AI Toy Prototypes News and AI Toy Future Concepts News, is even more integrated. We can expect to see more sophisticated hardware alongside the sensors, such as haptic feedback systems that allow a child to “feel” a digital object, or even scent emitters that release smells to create more immersive storytelling. The rise of generative AI will allow toys to create unique stories, art, and music in response to sensory input, pushing the boundaries of AI Art Toy News and AI Musical Toy News. Furthermore, trends in Toy Factory / 3D Print AI News suggest a future of hyper-personalization, where consumers can use the customization platforms covered in AI Toy Customization News to design and print their own modular robots with à la carte sensor selections.

Conclusion

The integration of advanced sensors has fundamentally altered the DNA of the modern toy. They are the sensory organs that allow AI to perceive the world, transforming inert plastic and silicon into dynamic, responsive, and intelligent companions. From LiDAR-equipped rovers that map our living rooms to plush toys that respond to a gentle touch, this technology is bridging the gap between the physical and digital realms of play. As sensor fusion, edge computing, and AI models continue to evolve, they will unlock unprecedented levels of interactivity, personalization, and educational value. The ongoing developments in AI Toy Innovation News are not just about creating more complex gadgets; they are about building richer, more meaningful, and more impactful play experiences for the next generation.